Tempo Punk — Audio Systems Architecture for a Rhythm FPS

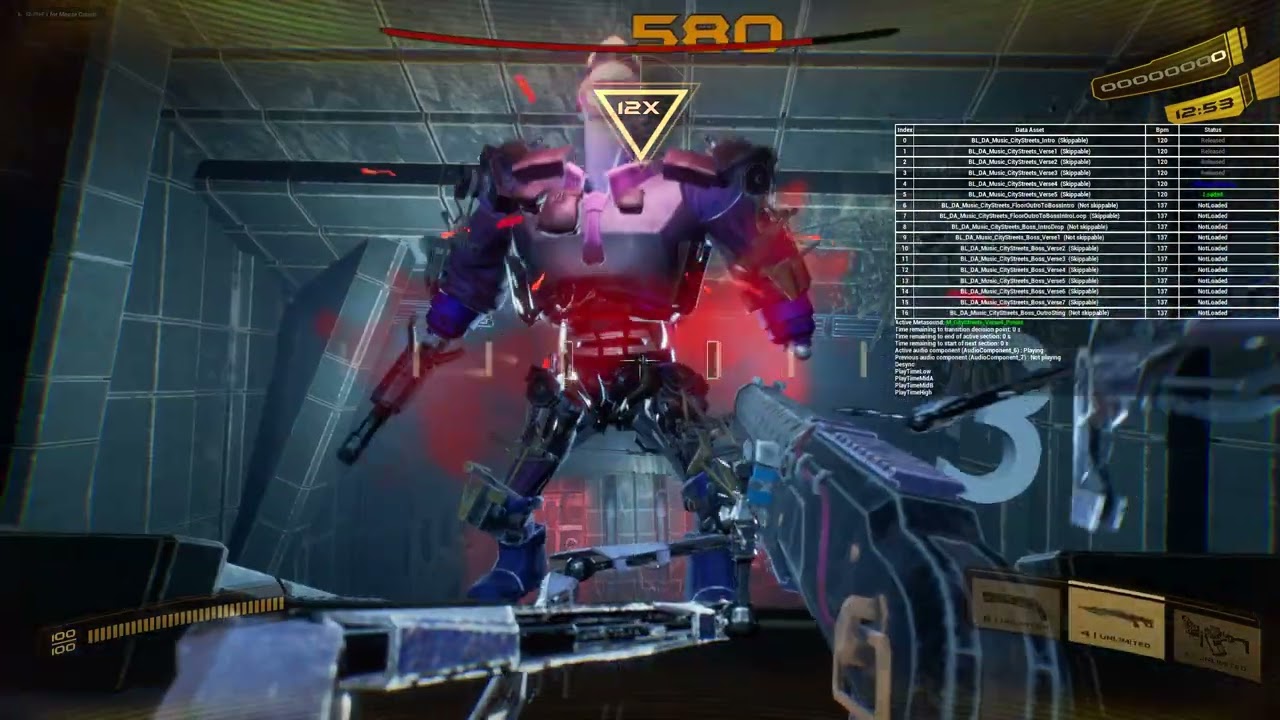

- Architected and built the Core Rhythm Subsystem in C++ — synchronizing all gameplay events to music with sub-frame accuracy using Quartz timestamps.

- Designed the MetaSounds Procedural Music System supporting intensity layers, section transitions, and licensed track integration.

- Built 50+ SFX with beat-accurate timing via BPM-driven stretch/compress — every reload, dash, and shot locks to the active track's tempo.

The Project

Tempo Punk is a rhythm-based first-person shooter set in a cyberpunk world. Music isn’t background — it’s the core gameplay mechanic. Every shot, reload, dash, enemy spawn, and level event synchronizes to the beat. The game features procedurally driven levels, boss fights, an upgrade shop, leaderboards, and a planned music import system.

The game has been showcased at multiple industry events (Digital Dragons, TwitchCon, GameOn, Busan Korea) and has received funding through the EU GameTech accelerator program and Epic MegaGrants.

Links: Steam page

What Shipped

- Showcased at Digital Dragons, TwitchCon, GameOn, and Busan Korea — industry-validated proof of concept

- Secured 15 licensed tracks from 14 artists (~1.5M monthly Spotify listeners) on a €7,000 budget

- Received EU GameTech accelerator funding and Epic MegaGrants backing

- Sub-frame audio accuracy achieved — replaced animation-driven timing with Quartz timestamps, eliminating persistent latency

My Role

I’m the audio systems architect and programmer on a 5-person team. In practice the role extends well beyond audio — I own all audio systems and the game framework systems (GameState, GameInstance, GameMode). I also contribute to gameplay programming, handle music licensing, represent the studio at events, produce trailer audio, and write technical documentation. I manage my own work end-to-end: breaking features into tasks, planning sprints, and managing my workload to meet deadlines.

What I Built

Core Rhythm Subsystem (C++)

The foundational system that synchronizes all gameplay to music. Provides beat events, quantization, accuracy detection, and timing data to every other system in the game.

- Architected and built from scratch in C++ with Blueprint-exposed interfaces

- Implemented Quartz-based beat matching and quantization for frame-accurate audio-gameplay synchronization

- Beat matching for full-beat, half-beat, and near-beat actions with configurable accuracy ranges

- Music re-syncing system to detect and recover from runtime audio desyncs across variable hardware

- Beat counter for licensed tracks with looping sections — maintains accurate global beat position for syncing in-game events to specific song moments

- Audio stream caching and variable transition point systems for seamless music transitions

- Solved persistent audio latency by replacing animation-driven sound timing with a Quartz timestamp-based system, achieving sub-frame accuracy
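The beat-matching bullet above can be illustrated with a minimal sketch in plain C++. It is not the shipped code and does not use the Quartz API: the names `BeatWindow`, `OffsetToNearestGridLine`, and `ClassifyHit` are hypothetical, and a constant tempo is assumed. It measures how far an input lands from the nearest full-beat or half-beat grid line and compares that against configurable windows.

```cpp
#include <cmath>

// Hypothetical names; an illustration of the idea, not the Quartz API.
enum class BeatAccuracy { Perfect, Near, Miss };

struct BeatWindow {
    double perfectSeconds; // largest |offset| still counted as a perfect hit
    double nearSeconds;    // largest |offset| still counted as a near hit
};

// Signed offset in seconds from timeSeconds to the nearest grid line.
// subdivisionsPerBeat = 1 checks full beats, 2 checks half beats.
// Assumes a constant tempo with beat 0 at time 0.
double OffsetToNearestGridLine(double timeSeconds, double bpm, int subdivisionsPerBeat) {
    const double stepSeconds = 60.0 / (bpm * subdivisionsPerBeat);
    const double nearestIndex = std::round(timeSeconds / stepSeconds);
    return timeSeconds - nearestIndex * stepSeconds;
}

// Classifies an input time against the configured accuracy windows.
BeatAccuracy ClassifyHit(double timeSeconds, double bpm, int subdivisionsPerBeat,
                         const BeatWindow& window) {
    const double error =
        std::fabs(OffsetToNearestGridLine(timeSeconds, bpm, subdivisionsPerBeat));
    if (error <= window.perfectSeconds) return BeatAccuracy::Perfect;
    if (error <= window.nearSeconds) return BeatAccuracy::Near;
    return BeatAccuracy::Miss;
}
```

At 120 BPM, an input at 1.25 s is a miss against the full-beat grid but lands exactly on a half-beat line, which is why the subdivision is a parameter rather than a constant.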

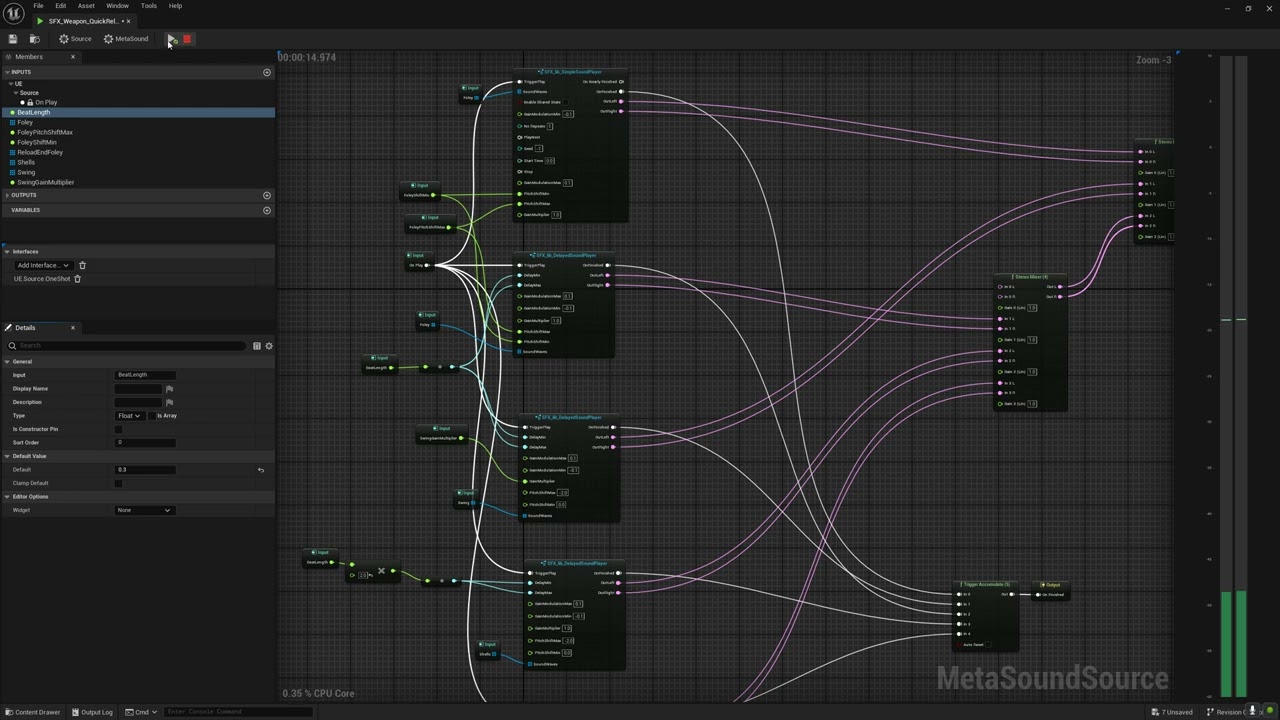

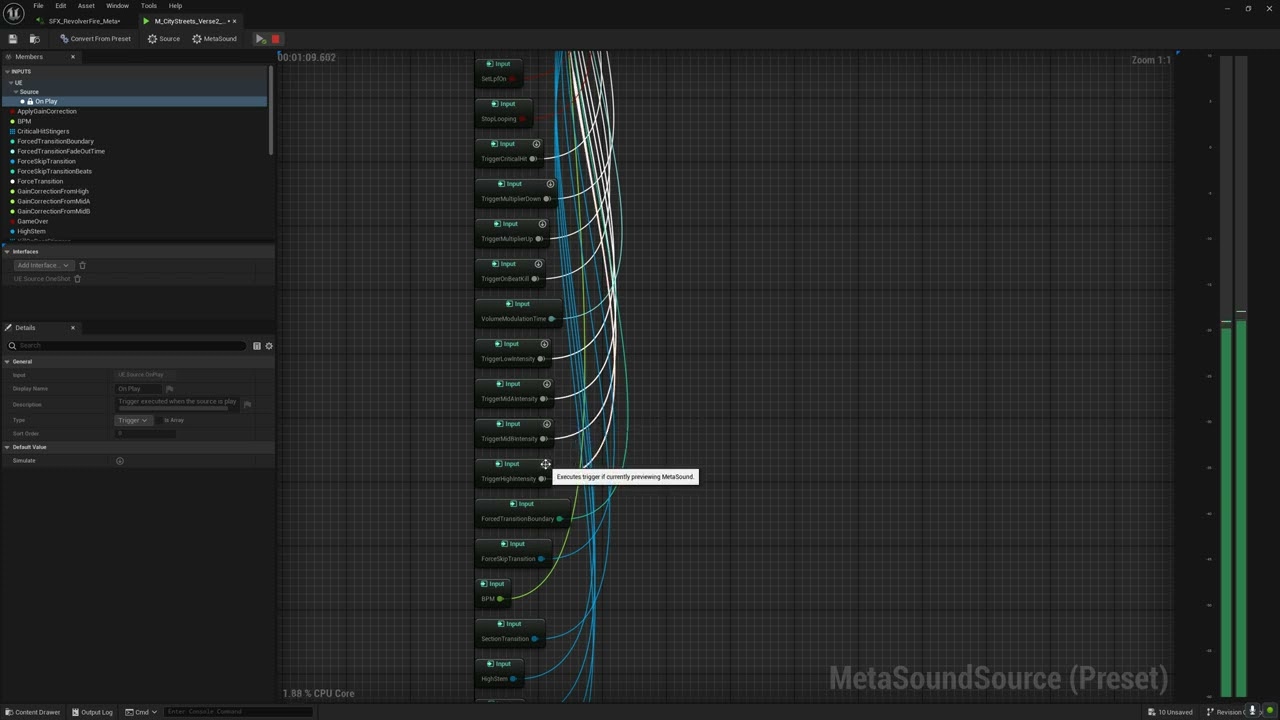
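For the beat counter on licensed tracks with looping sections, one way to picture the bookkeeping is to keep two counters: a global beat that only ever increases and a track beat that wraps inside the loop. The sketch below is an assumption about the general shape, with hypothetical names (`LoopSection`, `BeatCounter`), and is not taken from the project.

```cpp
#include <cstdint>

// Hypothetical sketch: a monotonic global beat alongside a track position
// that wraps while a looping section is active.
struct LoopSection {
    std::int64_t startBeat; // first beat inside the loop
    std::int64_t endBeat;   // one past the last beat inside the loop
};

class BeatCounter {
public:
    explicit BeatCounter(LoopSection loop) : loop_(loop) {}

    // Disable looping to let the track continue past the loop end.
    void SetLooping(bool enabled) { looping_ = enabled; }

    // Call once per beat event from the music clock.
    void Advance() {
        ++globalBeat_;
        ++trackBeat_;
        if (looping_ && trackBeat_ == loop_.endBeat) {
            trackBeat_ = loop_.startBeat; // wrap back to the loop start
        }
    }

    std::int64_t GlobalBeat() const { return globalBeat_; } // never wraps
    std::int64_t TrackBeat() const { return trackBeat_; }   // position in the song

private:
    LoopSection loop_;
    bool looping_ = true;
    std::int64_t globalBeat_ = 0;
    std::int64_t trackBeat_ = 0;
};
```

Events tied to a specific song moment would key off `TrackBeat()`, while anything that needs elapsed musical time would read `GlobalBeat()`.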

Procedural Music System (MetaSounds)

Generates and manages dynamic music that responds to gameplay state.

- Built from scratch using Unreal MetaSounds

- Designed the Music Manager architecture supporting multiple concurrent tracks, intensity layers, section transitions, and integration with both procedural (generated) and licensed music

- Licensed track integration pipeline: breaking down tracks into stems, creating procedural versions, implementing looping, building beat charts

- Created documentation for external music producers explaining how the procedural music system works

The Music Manager handles two key runtime behaviors: switching intensity layers based on combat state, and managing transitions between song sections.
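A rough sketch of those two behaviors in plain C++ follows. Everything in it is an assumption made for illustration: the `CombatState` values, the layer counts, and the choice to apply section changes on the next bar boundary are hypothetical and not confirmed by the project.

```cpp
#include <cstdint>

// Hypothetical combat states and layer counts, for illustration only.
enum class CombatState { Explore, Combat, Boss };

// Maps the combat state to how many stem layers should be audible.
int ActiveLayerCount(CombatState state) {
    switch (state) {
        case CombatState::Explore: return 1;
        case CombatState::Combat:  return 2;
        case CombatState::Boss:    return 3;
    }
    return 1;
}

// Defers a requested section change until the next bar boundary
// (an assumed quantization rule).
class SectionScheduler {
public:
    explicit SectionScheduler(int beatsPerBar) : beatsPerBar_(beatsPerBar) {}

    void RequestSection(int sectionId) {
        pending_ = sectionId;
        hasPending_ = true;
    }

    // Call on every beat; returns true if the section changed on this beat.
    bool OnBeat(std::int64_t beatIndex) {
        const bool isBarStart = (beatIndex % beatsPerBar_) == 0;
        if (isBarStart && hasPending_) {
            current_ = pending_;
            hasPending_ = false;
            return true;
        }
        return false;
    }

    int CurrentSection() const { return current_; }

private:
    int beatsPerBar_;
    int current_ = 0;
    int pending_ = 0;
    bool hasPending_ = false;
};
```

Deferring the change to a bar boundary keeps a transition from cutting across a musical phrase; a real system could just as well expose other transition points.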

Sound Design & Implementation

Designed and implemented 50+ sound effects across weapons (shotgun, revolver, mortar, pistol), player actions (footsteps, jump, dash, slide, damage, death), enemy AI (death, hit, spawn, melee, projectile), bosses (voice stingers, explosions, rocket barrage), and environment (city ambience, doors, UI). Produced “Mother” voice performances for trailer and tutorial.

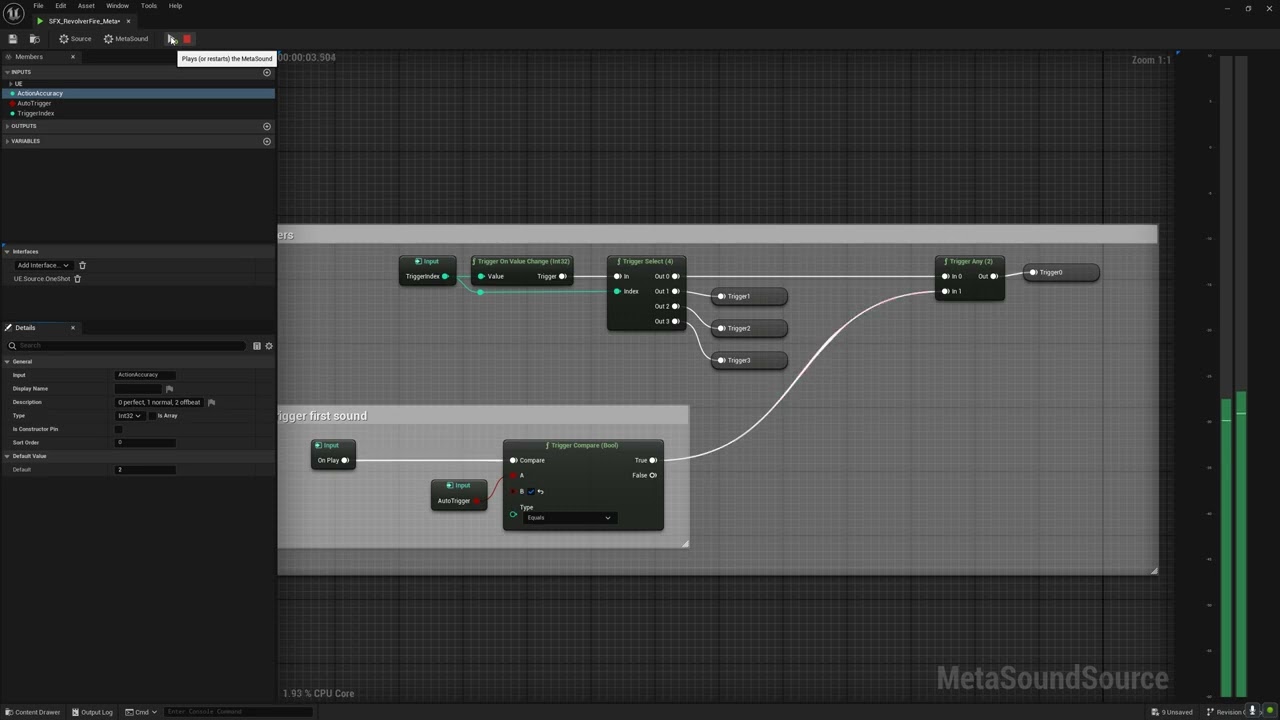

The SFX implementation relies on custom MetaSound templates I built for three core behaviors:

Accuracy-reactive sound playback — Weapons produce different SFX depending on how close the player’s shot is to the beat. A perfect-beat hit, a near-miss, and an off-beat shot each trigger distinct sound variations, giving immediate audio feedback on timing accuracy:

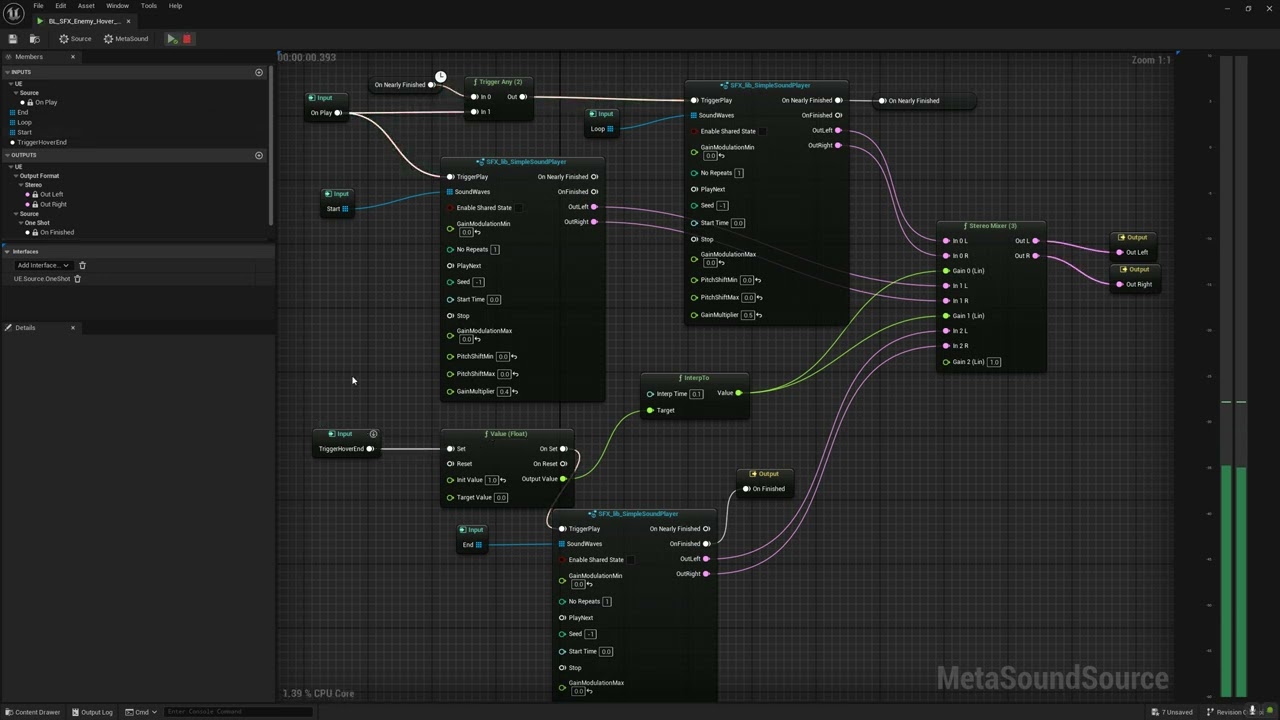

Looping sounds with tails — Sustained sounds (like a hovering enemy) need to loop cleanly during gameplay and then play a natural fade-out tail when the source stops. The MetaSound template handles the transition from loop to tail seamlessly:

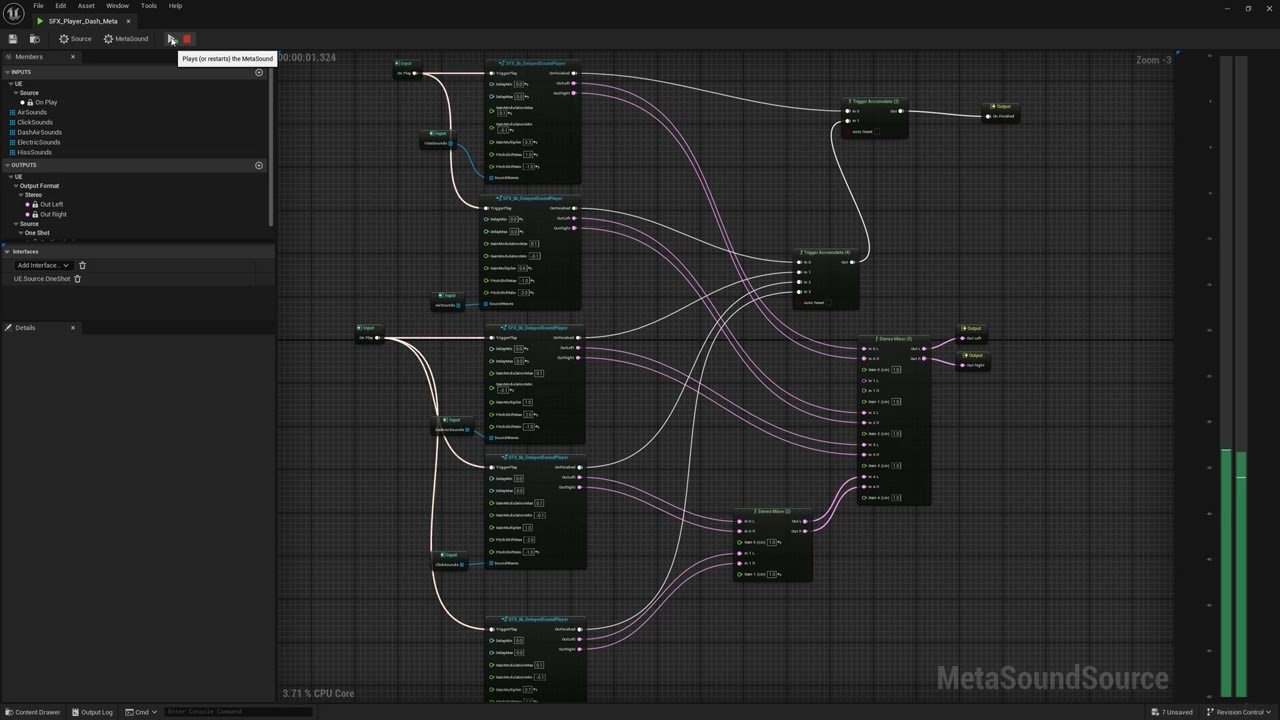

Randomized modulation for repeating sounds — Sounds that fire frequently (footsteps, rapid-fire weapons, UI ticks) need variation to avoid listener fatigue. The template applies randomized pitch, timing, and modulation per trigger.
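As a minimal sketch of per-trigger variation, written in plain C++ rather than as a MetaSound graph: each trigger rolls a pitch offset, a gain offset, and a small start delay from configured ranges. The struct names and the use of gain as the "modulation" parameter are assumptions for illustration.

```cpp
#include <cmath>
#include <random>

// Hypothetical per-trigger variation; gain stands in for "modulation" here.
struct TriggerVariation {
    double pitchRatio;   // playback-rate multiplier
    double gain;         // linear gain multiplier
    double delaySeconds; // start offset for this trigger
};

struct VariationRanges {
    double maxPitchSemitones;  // pitch is rolled in [-max, +max] semitones
    double maxGainReductionDb; // gain is rolled in [-max, 0] dB
    double maxDelaySeconds;    // delay is rolled in [0, max] seconds
};

// Rolls one set of random offsets for a single sound trigger.
TriggerVariation RollVariation(std::mt19937& rng, const VariationRanges& r) {
    std::uniform_real_distribution<double> pitch(-r.maxPitchSemitones, r.maxPitchSemitones);
    std::uniform_real_distribution<double> gainDb(-r.maxGainReductionDb, 0.0);
    std::uniform_real_distribution<double> delay(0.0, r.maxDelaySeconds);

    TriggerVariation v;
    v.pitchRatio = std::pow(2.0, pitch(rng) / 12.0); // semitones to rate ratio
    v.gain = std::pow(10.0, gainDb(rng) / 20.0);     // decibels to linear gain
    v.delaySeconds = delay(rng);
    return v;
}
```

Rolling in semitones and decibels rather than raw ratios keeps the variation perceptually even in both directions.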

Audio Mixing & Spatialization

Designed and implemented the full mixing system from scratch: sound class hierarchy, control bus routing, submixes, parameter patches, attenuation, and concurrency rules. Built a music compressor, ran multiple full mixing passes across the project lifecycle, and set up an audio priority hierarchy for managing dozens of simultaneous sources during combat.

Convolution reverb — Implemented a convolution reverb system (built twice — once initially, once after a full audio refactor). Volume-based reverb zones shape the acoustic character of each space in the game.

3D spatial audio — Full spatialization system for positional sound sources. The visualization below shows how sound sources are placed and attenuated in 3D space:

Randomized spatial ambience — A system for placing ambient sound emitters throughout levels with randomized positioning and timing, creating a living soundscape without manually scripting every source.

Gameplay Programming

- Implemented the Game Core architecture: GameState, GameInstance, GameMode (game launch, level launch, level travel transitions, level selection flow, audio options)

- Designed and built the score system (then redesigned it from scratch based on playtester feedback across 5+ events)

- Developed a Google Analytics subsystem in C++ and integrated it in-game to track demo player behavior and progress metrics

Pipeline & Tooling

- Custom Reaper DAW script for generating beat charts from audio files, enabling level designers to build mini-floors from any licensed music track

- Phase-cancellable metronome system for clean game footage capture

- Music system debug widget with play state tracking

Key Challenges & Solutions

Frame-accurate rhythm synchronization across hardware: The entire game depends on audio and gameplay being synchronized to within a fraction of a frame. Replaced an early animation-driven timing approach with a Quartz timestamp-based system.
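To put rough numbers on the difference, here is a small arithmetic sketch in plain C++. It does not use Quartz, and it assumes the animation-driven path could only start a sound on a game-frame tick; both function names are hypothetical. At 48 kHz one sample lasts about 0.02 ms, while a frame at 60 fps lasts about 16.7 ms.

```cpp
#include <cmath>
#include <cstdint>

// Sample index at which a beat starts, counted from the start of the track.
// Assumes a constant tempo; hypothetical helper, not a Quartz call.
std::int64_t BeatToSample(std::int64_t beatIndex, double bpm, double sampleRate) {
    const double secondsPerBeat = 60.0 / bpm;
    return static_cast<std::int64_t>(
        std::llround(beatIndex * secondsPerBeat * sampleRate));
}

// Delay in seconds when a sound can only start on the next frame tick.
// Models the assumed limitation of frame-driven timing.
double FrameTickDelay(double eventSeconds, double framesPerSecond) {
    const double nextTickSeconds =
        std::ceil(eventSeconds * framesPerSecond) / framesPerSecond;
    return nextTickSeconds - eventSeconds;
}
```

With these assumptions, an event at 0.51 s waits roughly 6.7 ms for the next 60 fps tick, whereas scheduling against the audio clock can place it on the intended sample.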

Supporting both procedural and licensed music: The game needed to work with dynamically generated music and pre-existing licensed tracks through a single unified system. Designed the Music Manager architecture to abstract away the source — both types feed into the same rhythm subsystem, beat matching, and intensity layer logic.
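The abstraction described above might look something like the following sketch, which is an assumption about the general shape rather than the project's actual classes. `IMusicSource`, `ProceduralSource`, `LicensedSource`, and this reduced `MusicManager` are hypothetical names in plain C++.

```cpp
#include <memory>
#include <utility>

// Hypothetical interface: what downstream systems need from any music source.
class IMusicSource {
public:
    virtual ~IMusicSource() = default;
    virtual double Bpm() const = 0;
    virtual int LayerCount() const = 0;
};

// Music generated at runtime.
class ProceduralSource : public IMusicSource {
public:
    ProceduralSource(double bpm, int layers) : bpm_(bpm), layers_(layers) {}
    double Bpm() const override { return bpm_; }
    int LayerCount() const override { return layers_; }
private:
    double bpm_;
    int layers_;
};

// A licensed track broken into stems, with tempo taken from its beat chart.
class LicensedSource : public IMusicSource {
public:
    LicensedSource(double chartedBpm, int stemCount)
        : bpm_(chartedBpm), stems_(stemCount) {}
    double Bpm() const override { return bpm_; }
    int LayerCount() const override { return stems_; }
private:
    double bpm_;
    int stems_;
};

// Downstream logic only ever talks to the interface.
// Precondition: SetSource must be called before the other methods.
class MusicManager {
public:
    void SetSource(std::unique_ptr<IMusicSource> source) { source_ = std::move(source); }
    double SecondsPerBeat() const { return 60.0 / source_->Bpm(); }
    int ClampLayer(int requested) const {
        const int top = source_->LayerCount() - 1;
        if (requested < 0) return 0;
        return requested > top ? top : requested;
    }
private:
    std::unique_ptr<IMusicSource> source_;
};
```

Because timing and layer logic read only from the interface, swapping a generated track for a licensed one does not touch the code that consumes beats or intensity layers.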

Music licensing on a tight budget: Approached ~100 artists, labels, and publishers. Secured 15 licensed tracks from 14 artists (combined ~1.5M monthly Spotify listeners) on a €7,000 budget by drafting all synchronisation agreements from scratch and designing a milestone-based payment structure.

Tools & Tech

Unreal Engine 5.3, C++, Blueprints, MetaSounds, Quartz, Reaper, Ableton Live Suite, Google Analytics, Steam SDK, ClickUp, ElevenLabs
